AWS Introduces a Flurry of New EC2 Instances at re:Invent

(Michael Vi/Shutterstock)

AWS has announced three new Amazon Elastic Compute Cloud (Amazon EC2) instances powered by AWS-designed chips, as well as several new Intel-powered instances, at its AWS re:Invent 2022 event in Las Vegas.

The first new offering is the Hpc7g instance, featuring new AWS Graviton3E chips. The company says the instance offers 2x better floating-point performance than current C6gn instances and up to 20% higher performance than current Hpc6a instances. AWS says its network-optimized instances (e.g., C5n, R5n, M5n, and C6gn) have thus far been sufficient for scientific, engineering, and research HPC workloads, but emerging artificial intelligence use cases, which can scale to tens of thousands of cores or more, require further scalability and cost reduction.

The company says the Hpc7g instances provide high memory bandwidth and 200 Gbps of Elastic Fabric Adapter (EFA) network bandwidth. They can be used with AWS ParallelCluster, which can provision Hpc7g instances alongside other instance types, allowing customers to run different workloads within the same HPC cluster. The Hpc7g instances will be available in multiple sizes with up to 64 vCPUs and 128 GiB of memory.

AWS EC2 C7gn instances are powered by Graviton3E processors, based on the Arm architecture, and the latest Nitro cards. Source: AWS

The second new addition is the C7gn instance. It combines the Graviton3E processors with AWS Nitro cards, which reduce the I/O load on the CPU. Network-intensive workloads such as network virtual appliances and data encryption may require higher packet-per-second performance, higher network bandwidth, and faster cryptographic performance. Compared to current networking-optimized instances, AWS says C7gn offers up to 2x the network bandwidth and up to 50% higher packet-per-second performance. Compared to C6gn instances, AWS says C7gn delivers up to 25% better compute performance and up to 2x faster performance for cryptographic workloads. C7gn instances are available in preview starting today.

For deep learning purposes there are Inf2 instances, with new AWS Inferentia2 chips. These instances were built to run large models with up to 175 billion parameters and offer up to 4x the throughput and 10x lower latency compared to current Inf1 instances, according to AWS. The company says this new release is in response to demand from data scientists and machine learning engineers who are building ever larger and more complex deep learning models such as large language models with over 100 billion parameters.
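A rough back-of-envelope estimate illustrates why models of this size are hard to serve from a single accelerator. The sketch below counts only the memory needed to hold the weights, assuming 16-bit (2-byte) parameters. The 32 GiB per-accelerator figure is a hypothetical value chosen for illustration, not a specification quoted by AWS, and the function names are likewise illustrative.

```python
import math


def weights_gib(num_params: int, bytes_per_param: int) -> float:
    """Memory needed to hold the model weights alone, in GiB."""
    return num_params * bytes_per_param / 2**30


def min_accelerators(num_params: int, bytes_per_param: int,
                     mem_per_accel_gib: float) -> int:
    """Lower bound on accelerators needed just to fit the weights.

    Ignores activations, caches, and runtime overhead, so real
    deployments would need more headroom than this.
    """
    return math.ceil(weights_gib(num_params, bytes_per_param) / mem_per_accel_gib)


PARAMS = 175_000_000_000      # model size cited in the article
BYTES_PER_PARAM = 2           # assumption: 16-bit weights
MEM_PER_ACCEL_GIB = 32.0      # assumption: hypothetical accelerator memory

size_gib = weights_gib(PARAMS, BYTES_PER_PARAM)
chips = min_accelerators(PARAMS, BYTES_PER_PARAM, MEM_PER_ACCEL_GIB)
print(f"weights: {size_gib:.0f} GiB, accelerators needed: at least {chips}")
```

Under these assumptions the weights alone occupy several hundred GiB, so the model has to be split across multiple chips before any inference can run.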

“While training receives a lot of attention, inference accounts for the majority of complexity and cost (i.e., for every $1 spent on training, up to $9 is spent on inference) of running machine learning in production, which can limit its use and stall customer innovation. Customers want to use state-of-the-art deep learning models in their applications at scale, but they are constrained by high compute costs,” AWS said in a release.

AWS touts its Inf2 instance as the first inference-optimized EC2 instance that supports distributed inference, a technique that spreads large models across several chips for better performance. Inf2 instances also support stochastic rounding, a way of rounding probabilistically that AWS says enables high performance and higher accuracy compared to legacy rounding modes. In addition to the throughput and latency gains over Inf1, Inf2 instances offer up to 45% better performance per watt compared to GPU-based instances, says AWS. Inf2 instances are available today in preview.
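To make the idea of stochastic rounding concrete, here is a minimal sketch of the general technique in plain Python. It is not a description of how Inferentia2 implements it in hardware; the function names and the integer grid are illustrative assumptions. A value is rounded up with probability equal to its fractional part, so small increments that round-to-nearest would always discard are preserved on average.

```python
import math
import random


def stochastic_round(x: float, rng: random.Random) -> int:
    """Round x to a neighboring integer, rounding up with
    probability equal to the fractional part of x."""
    lower = math.floor(x)
    frac = x - lower
    return lower + (1 if rng.random() < frac else 0)


def accumulate(step: float, n: int, rounder) -> int:
    """Add `step` n times, rounding the running total after every add.

    This mimics accumulating small updates in a low-precision format.
    """
    total = 0
    for _ in range(n):
        total = rounder(total + step)
    return total


rng = random.Random(0)  # fixed seed so the run is repeatable

# Round-to-nearest discards every 0.3 increment, so the total never moves.
nearest = accumulate(0.3, 1000, round)

# Stochastic rounding keeps the increments on average (true sum is 300).
stochastic = accumulate(0.3, 1000, lambda v: stochastic_round(v, rng))

print("round-to-nearest:", nearest)
print("stochastic:", stochastic)
```

With round-to-nearest the accumulated total stays at 0, while the stochastically rounded total lands near the true sum of 300. This is the accuracy benefit the technique is meant to provide when many small values are accumulated at low precision.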

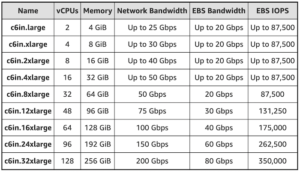

In addition to the instances powered by AWS-designed chips, the company also announced the next generation of general purpose, compute-optimized, and memory-optimized instances powered by 3rd generation Intel Xeon Scalable processors (Ice Lake) running at 3.5 GHz with up to 200 Gbps of network bandwidth. AWS says the Ice Lake processors offer a 1.46x average performance gain over the prior generation.

The M6in and M6idn general purpose instances, ideal for applications that use compute, memory, and networking resources in equal proportions, such as web servers and code repositories, are each available in nine sizes. They are available in the US East (Ohio, N. Virginia) and Europe (Ireland) regions in On-Demand and Spot form.

There are also new C6in compute-optimized instances that are designed to handle up to twice as many packets per second as preceding instances: “With more network bandwidth and PPS on tap, heavy-duty analytics applications that retrieve and store massive amounts of data and objects from Amazon Simple Storage Service (Amazon S3) or data lakes will benefit. For workloads that benefit from low latency local storage, the disk versions of the new instances offer twice as much instance storage versus previous generation,” said AWS Chief Evangelist Jeff Barr in a blog post.

For workloads that process large data sets in memory, there are the new R6in and R6idn instances, which come in nine sizes and are available in the US East (Ohio, N. Virginia), US West (Oregon), and Europe (Ireland) regions in On-Demand and Spot form. Barr says the higher network and EBS performance of the R6in instances will allow customers to scale their network-intensive SQL, NoSQL, and in-memory database workloads, with the option to use R6idn when they need low-latency local storage.

The company also unveiled new Hpc6id instances powered by Intel Ice Lake processors and built for tightly coupled HPC workloads. AWS asserts that Hpc6id instances have the best per-vCPU HPC performance when compared to similar x86-based EC2 instances for data-intensive HPC workloads. The company states that customers running license-bound scenarios can lower infrastructure and HPC software licensing costs using the Hpc6id instance. Additionally, customers using HPC codes optimized for Intel-specific features, such as Math Kernel Library, can migrate large HPC workloads to Hpc6id and scale up to cluster sizes of tens of thousands of cores through 200 Gbps of EFA bandwidth, consolidating HPC projects on fewer nodes with faster job completion time.

AWS also announced memory-optimized, high-frequency R7iz instances powered by 4th generation Intel Xeon Scalable processors, also known as Sapphire Rapids. Among x86-based EC2 instances, these have the highest performance per vCPU and deliver up to 20% higher performance than z1d instances, says AWS. The company touts R7iz instances, with support for DDR5 memory, as ideal for front-end Electronic Design Automation (EDA), relational databases with high per-core licensing fees, financial, actuarial, and data analytics simulations, and other workloads requiring a combination of high compute performance and high memory footprint. Interested customers can sign up for a preview.

“Data center customers are looking to keep up with demand, increase business value of their data and improve their overall costs,” said Lisa Spelman, corporate vice president and general manager of Intel Xeon Products. “AWS is delivering new instances that leverage the accelerated performance and security of 4th Gen Intel Xeon to support the most demanding workloads.”

This story has been updated to include information about the Hpc6id EC2 instance.
